The GigaSECURE Security Delivery Platform Exports More Details in IPFIX

For over two years, we at Plixer have been touting how Gigamon offers a solution that exports more details in IPFIX than any other vendor. Back in 2015, we discussed why traffic visibility improves security analytics. Next, when Gigamon stepped it up and started exporting DNS information in their flow export, we wrote about how to use their appliances to gain visibility into Akamai and Amazon AWS traffic. For visual folks, we posted a video on YouTube:

“Gigamon + Plixer – Leveraging the Power of Network Visibility for Best-in-Class Security.”

In the last year, we have come to rely heavily on our in-house GigaSECURE® Security Delivery Platform to help us manage, secure and understand what is happening across our own network. When your team is ready to start collecting, reporting and analyzing the rich flows and metadata that your GigaSECURE Security Delivery Platform exports, there are several criteria to keep in mind when choosing a NetFlow and IPFIX traffic analytics system. A partial list includes:

- Scale: If your organization plans to export more than 40,000 flows per second, most vendors will claim that their solutions can easily handle the volume. The most important issue to consider is not the rate of data collection but the speed of reporting. Getting the flows into the database is the easy part; quickly pulling them out to show a traffic trend over time and details on the top applications is the tough part. Speed of retrieval is what really matters, so make sure you test it.

- Flow sequence numbers: Are you sure the collector is storing all the flows it receives? Plixer Scrutinizer® allows you to view the missed flow sequence numbers (MFSN) per exporting router. This is important because you need to know if flows are being dropped and, if so, whether the exporter, the network or the collector is dropping them.

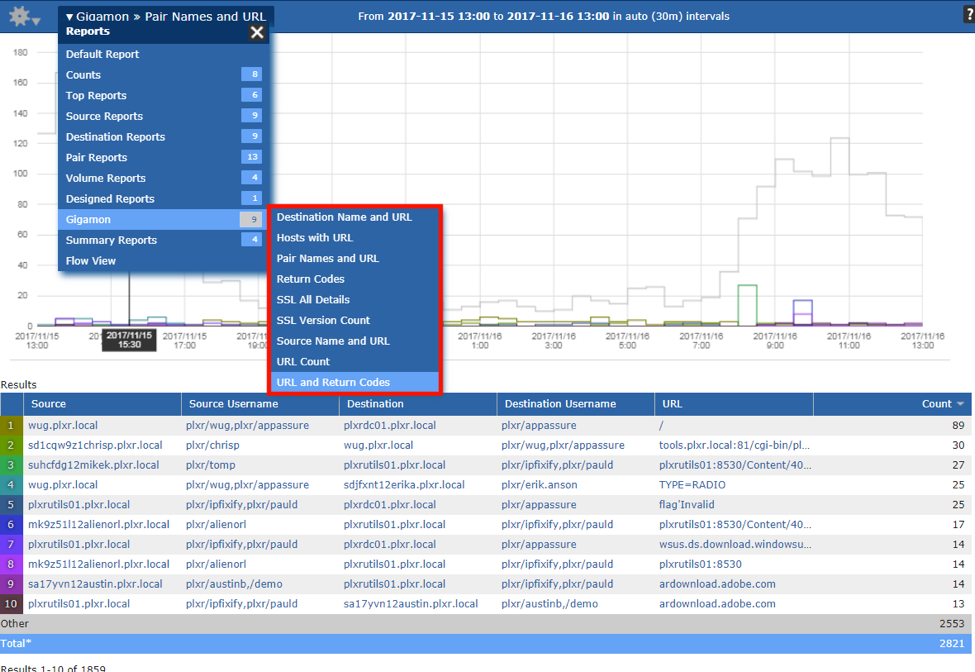

- Reporting and filtering: These two go hand in hand. To narrow your analysis down to a specific event, it’s key to be able to change the report and get a different angle on the traffic patterns. Data filtering is a critical part of that process: excluding and including certain IP addresses, ports and protocols is part of any troubleshooting effort. The ability to filter on any exported data element (for example, URL, hostname, latency or packet loss) will shorten the Mean-Time-To-Know (MTTK).

- Distributed collection: Large-scale and diverse environments require a solution that supports the deployment of multiple collectors to help prevent flow exports from plugging up WAN links. Reporting should preserve data accuracy and transparency across the distributed architecture. Together, scale and distributed collection allow the largest enterprises to collect millions of flows per second.

- Metadata: The integration and correlation of flows with third-party data sources is paramount. Metadata from authentication systems allows us to associate usernames with IP addresses. DNS logs allow systems to correlate Fully Qualified Domain Names (FQDNs) with IP addresses, so that traffic to services such as Amazon AWS or Akamai Technologies can be identified.

- An open approach: This goes hand in hand with gathering metadata from any vendor, but can also mean working with solutions, such as Grafana, which often require well-documented APIs.
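
To make the flow sequence number criterion above more concrete, here is a minimal Python sketch of how a collector could count missed records per exporter. It is not how Scrutinizer computes MFSN; it only illustrates the idea. It assumes IPFIX semantics, where the sequence number in each message header is the running count (modulo 2^32) of data records the exporter sent before that message. The names `SequenceTracker` and `record_message` are hypothetical, and the sketch does not try to distinguish an exporter restart from a late or duplicate message.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

SEQ_MODULO = 2 ** 32  # IPFIX sequence numbers wrap at 32 bits


@dataclass
class SequenceTracker:
    """Tracks the next expected sequence number for one exporter stream."""
    expected: Optional[int] = None
    missed: int = 0

    def observe(self, sequence_number: int, record_count: int) -> int:
        """Return how many records appear to be missing before this message."""
        if self.expected is None:
            # First message from this exporter: nothing to compare against yet.
            self.expected = (sequence_number + record_count) % SEQ_MODULO
            return 0
        gap = (sequence_number - self.expected) % SEQ_MODULO
        if gap >= SEQ_MODULO // 2:
            # Assumption: a "negative" gap is a late or duplicate message,
            # so it is ignored rather than counted as a loss.
            return 0
        self.missed += gap
        self.expected = (sequence_number + record_count) % SEQ_MODULO
        return gap


# Hypothetical key: (exporter IP address, observation domain ID).
StreamKey = Tuple[str, int]


def record_message(trackers: Dict[StreamKey, SequenceTracker],
                   exporter_ip: str, domain_id: int,
                   sequence_number: int, record_count: int) -> int:
    """Update the tracker for one exporter stream and return the detected gap."""
    tracker = trackers.setdefault((exporter_ip, domain_id), SequenceTracker())
    return tracker.observe(sequence_number, record_count)


if __name__ == "__main__":
    trackers: Dict[StreamKey, SequenceTracker] = {}
    # Three messages from one exporter; 10 records go missing before the third.
    for seq, count in [(0, 10), (10, 5), (25, 5)]:
        gap = record_message(trackers, "10.0.0.1", 0, seq, count)
        print(f"seq={seq} records={count} missed_before={gap}")
    print("total missed:", trackers[("10.0.0.1", 0)].missed)
```

A counter like this tells you that records went missing between exporter and collector, not where. Deciding whether the exporter, the network or the collector dropped them still requires comparing it with the exporter's own counters and the collector's receive statistics.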

Many other features play a major role in selecting the ideal solution. If you need help deciding on the best NetFlow analyzer for your organization, check out the Gigamon-Plixer joint solution brief.
