Selecting Security Tools: Smart Network Segmentation and Intelligent Tool Routing

As mentioned in my last blog post, the first step in solving the security tool selection problem is asking the right questions and taking inventory of exactly what you do. For instance, when it comes to your unique network:

- Do you handle credit cards? Are you subject to Payment Card Industry (PCI) regulations?

- Do you handle Personally Identifiable Information (PII) in Europe that is in scope for the General Data Protection Regulation (GDPR)?

- Do you build computer things? Do your engineers do stuff that could “look suspicious”?

- Do you have a small number of things you really need to secure, but the rest can burn?

In my world, I know that:

- We build network security tools.

- Our engineers do a lot of devtest work that “looks suspicious.”

- We run traffic generators that create fake network behavior.

- We run a lot of testing side by side with production traffic.

- We have multiple clouds.

While our jobs entail much more, these tasks play directly into our tool selection process. Fortunately for me, I’ve been given carte blanche at Gigamon to test whatever tools I want on my live network. In fact, seeing how tools have failed in my network is what led me down the bespoke train of thought. To give some concrete examples:

- User behavior analytics (UBA) tools firing dozens of alerts per day.

- Network intrusion detection system (NIDS) tools failing to detect files fast enough due to excessive, irrelevant traffic.

- Metadata alarms triggering at all hours.

- Security Information and Event Management (SIEM) systems failing on unusual Transmission Control Protocol (TCP) parameters.

As a research and development shop that builds security tools, we do a lot of stuff that other security tools would consider bad. For example, if I were to deploy a UBA and one of its criteria is “Secure Shell (SSH) is suspicious,” I’m going to get a lot of alerts, need to do a ton of whitelisting that may even increase my risk, or both. Likewise, if you happen to be downloading malware samples that pass through your NIDS … well, as they say, “Hang on tight.”

By contrast, if you’re a retail organization, SSH on your network probably is suspicious, since it’s likely something only a few of your administrators are doing. For you, a UBA might drop in right out of the box and be an effective part of your security posture.
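To make the contrast concrete, here is a minimal sketch of a naive “SSH is suspicious” rule applied to two kinds of networks. The flow records, segment names, and counts are made up for illustration; they are not real traffic, and a real UBA would use far richer criteria than a port match.

```python
from collections import Counter

SSH_PORT = 22

# Made-up flow records as (segment, destination port). Not real traffic.
FLOWS = [
    ("dev", 22), ("dev", 22), ("dev", 443), ("dev", 22),
    ("retail", 443), ("retail", 443), ("retail", 22),
]


def ssh_alerts_by_segment(flows):
    """Count how often a naive 'SSH is suspicious' rule fires per segment."""
    return Counter(segment for segment, port in flows if port == SSH_PORT)


alerts = ssh_alerts_by_segment(FLOWS)
print(alerts["dev"], alerts["retail"])
```

In this toy data the same rule fires on most of the development flows but on only one retail flow, which is the difference between constant noise and a signal worth investigating.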

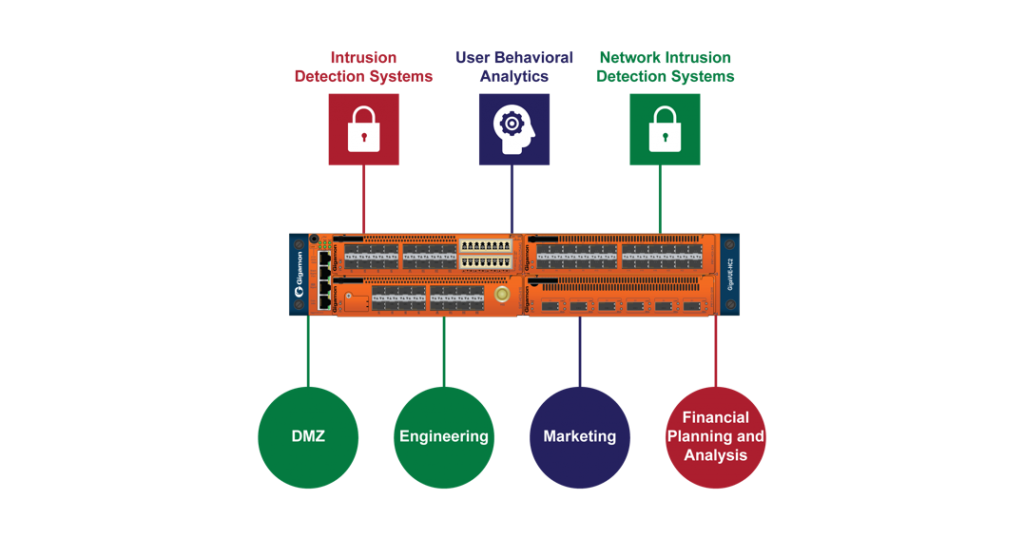

Or what if your business is somewhere in between – say you are developing tools in a cloud environment, but also have normal business operations like financial planning and accounting (FP&A) or human resources (HR)? In that case, tool selection gets more complicated. Each of your supported groups will likely have different threat models that need to be monitored differently – call it bespoke monitoring to go with our bespoke network. How do you secure those FP&A and HR people while not burning through Security Operations Center (SOC) analysts because of the pile of false positives coming out of your development team? And how do you secure the development team itself?

The answer: network segmentation and tool routing.

Strengthen Your Security Posture with More Nuanced Network Segmentation

Yes, I hear you, “I’ve got a DMZ and a management zone, I’m segmented already.” I’d argue that you can do better and strengthen your security posture with even more nuanced segmentation. Segmentation is generally viewed as a method to contain lateral movement, but I claim that we can expand this definition to:

Network segmentation: a strategy to contain lateral movement and provide situationally targeted security monitoring.

By recognizing the different behavioral scopes previously discussed, you can start segmenting based on security requirements. This isn’t a new concept. In James Rome’s paper “Enclaves and Collaborative Domains,” he notes that segmentation “is required when the confidentiality, integrity or availability of a set of resources differs from those of the general computational environment.” Does that sound familiar? You can solve this problem when you move into a micro-segmented environment – that is part one of the solution. Part two is getting the data from each segment to the correct tools.

Figure 1 shows how micro-segmentation looks at Gigamon – well, a bit. I’ve purposefully not added much detail so y’all don’t attack me, but it does show that we’re choosing tools based on how to properly monitor each group. For example, with my HR and FP&A teams, a UBA could be very effective at finding unusual behavior such as SSH on the segment or unusual client-to-client interactions. On the lab network, however, the UBA would likely gurgle blood, while an NIDS looking for file hashes could be useful, since even in testing I don’t expect engineers to be shipping malware around. Additionally, for tools that are of uniform use, like my SIEM, I could route all traffic to them. In practice, I don’t do this because I find targeted metadata easier to work with. Instead, I generate metadata from all my traffic and route the details that interest me into my SIEM for intel bumping and alerting.
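The segment-to-tool pairing described above can be sketched as a small lookup. This is only an illustration under stated assumptions: the CIDR ranges, segment names, and tool labels are hypothetical placeholders, not Gigamon’s actual layout or any product’s configuration.

```python
import ipaddress
from typing import List, Optional

# Hypothetical segments and address ranges, invented for illustration.
SEGMENTS = {
    "hr_fpa": ipaddress.ip_network("10.10.0.0/16"),
    "lab": ipaddress.ip_network("10.20.0.0/16"),
}

# Tools that should see traffic from each segment (placeholder names).
# Metadata generation is applied to every segment, as in the text above.
TOOLS_BY_SEGMENT = {
    "hr_fpa": ["uba", "metadata"],
    "lab": ["nids", "metadata"],
}

# Traffic from an unknown segment still gets metadata generated.
DEFAULT_TOOLS = ["metadata"]


def segment_for(src_ip: str) -> Optional[str]:
    """Return the name of the segment containing src_ip, or None."""
    addr = ipaddress.ip_address(src_ip)
    for name, network in SEGMENTS.items():
        if addr in network:
            return name
    return None


def tools_for(src_ip: str) -> List[str]:
    """Return the list of tools that should receive this source's traffic."""
    return TOOLS_BY_SEGMENT.get(segment_for(src_ip), DEFAULT_TOOLS)


print(tools_for("10.10.3.4"))
print(tools_for("10.20.1.1"))
```

Keeping the mapping as data rather than logic makes it easy to review which group is monitored by which tool, and to change the pairing as threat models evolve.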

Through a combination of smart segmentation and selective traffic routing to tools, you can gain much better visibility into your network and have high-fidelity data to work with. This approach allows you to better pair tools with workloads, which, in the long run, can lower your tool expenditure since you may need fewer boxes to cover the various targeted segments. What’s more, with intelligent tool routing, you can keep traffic you have whitelisted – for example, Netflix – from ever hitting the tools, thus lowering the overall tool spend as your network scales up.
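As a rough sketch of the whitelisting idea, the filter below drops flows bound for trusted destinations before they reach any tool and totals what is left. The allowlisted range is a reserved documentation network standing in for a trusted streaming service, and the flow sizes are made up; neither reflects a real provider’s address space or real traffic volumes.

```python
import ipaddress

# Hypothetical allowlist. 198.51.100.0/24 is a reserved documentation range
# used here as a stand-in for a trusted service; it is not a real provider.
ALLOWLISTED_DESTINATIONS = [ipaddress.ip_network("198.51.100.0/24")]


def should_forward(dst_ip: str) -> bool:
    """Return True if traffic to dst_ip should still be sent to the tools."""
    addr = ipaddress.ip_address(dst_ip)
    return not any(addr in network for network in ALLOWLISTED_DESTINATIONS)


def forwarded_bytes(flows) -> int:
    """Sum the bytes of flows that are not allowlisted.

    flows is an iterable of (destination IP, byte count) pairs.
    """
    return sum(size for dst_ip, size in flows if should_forward(dst_ip))


# Made-up flows: one to the allowlisted range, one to another destination.
sample_flows = [("198.51.100.7", 900), ("203.0.113.5", 100)]
print(forwarded_bytes(sample_flows))
```

The less allowlisted traffic the tools have to process, the less tool capacity you need to buy as the network grows.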

Related reading materials:

- SANS Institute white paper, “Secure Network Design: Micro Segmentation”

- SANS Institute white paper, “Design Secure Network Segmentation Approach”

- Sage Data Security blog, “Network Segmentation: Considerations for Design”