What Modern Infrastructure Monitoring Requires

Most infrastructure monitoring tools were designed for environments that were easier to define and control: centralized data centers, monolithic applications, and clear lines between infrastructure, network, cloud, and security teams. Today, organizations operate across on‑premises, hybrid, and multi‑cloud environments, with containers, microservices, and encrypted traffic woven into nearly every transaction.

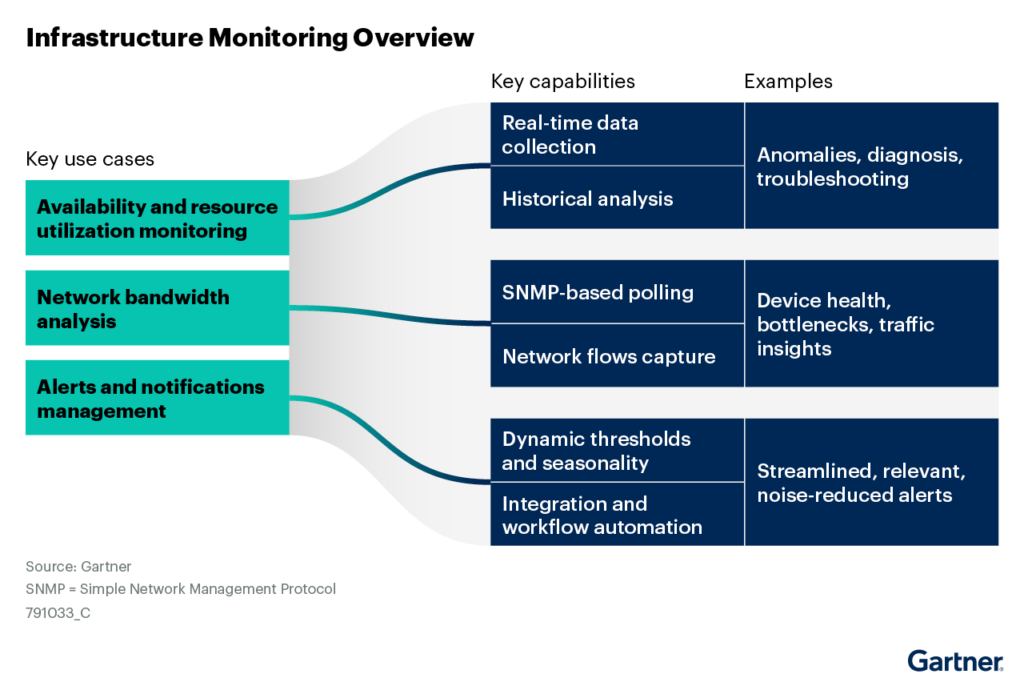

Industry analysts reinforce this expanded scope. As Gartner® notes:

“Infrastructure monitoring tools capture the health and resource utilization of IT infrastructure components wherever they reside (e.g., in a data center, at the edge, or IaaS or PaaS in the cloud). This enables I&O teams to monitor the availability and resource utilization data of physical, virtual, software entities, and AI systems — including servers, containers, network devices, database instances, hypervisors, storage, and basic application monitoring. These tools collect data in near real time and perform historical data analysis or trending of the elements they monitor.”1

Host and log‑centric tools remain essential, but on their own they cannot provide a complete view of availability, performance, and risk. Infrastructure leaders need to know whether applications are reachable, whether services are performing as expected, and whether teams can restore normal operations quickly when issues arise.

The Gigamon Deep Observability Pipeline addresses this gap by delivering a network‑centric visibility layer for the infrastructure monitoring tools organizations already use. With GigaVUE‑FM (Fabric Manager), teams can generate enriched, high‑fidelity, network‑derived telemetry that supports monitoring, alerting, and analytics across hybrid cloud infrastructure. In modern environments, this visibility layer is a critical component of an effective infrastructure monitoring architecture and a foundation for resilient digital services.

From Metrics Collection to Network-Derived Ground Truth

Traditional monitoring stacks rely on agents, logs, and metrics to track CPU, memory, process activity, and other infrastructure conditions. That data is necessary, but during an incident it often does not answer the questions that matter most:

- Can users actually access the service?

- Is the issue in the network, application, cloud, or supporting infrastructure?

- How quickly can teams identify root cause and restore service?

Enterprises are already shifting their focus toward these questions. As Gartner explains:

“As mature enterprises adopt cloud-native architectures, I&O teams have shifted their focus from basic troubleshooting toward root cause analysis in order to enhance resilience.”2

Yet traditional monitoring approaches often lack the data needed to answer those root cause questions in real time.

Gigamon adds a bottom‑up view by processing packets and flows across physical, virtual, and cloud networks, then transforming that traffic into structured telemetry that infrastructure monitoring and observability tools can consume.

The impact is clear: operations teams gain a neutral source of truth for what is happening across the network. Existing tools receive more complete, accurate, and deduplicated data, and teams spend less time reconciling conflicting dashboards during high‑pressure incidents.
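Deduplication matters because the same packet is often captured at more than one tap or mirror point, and forwarding every copy inflates counts in downstream tools. The sketch below illustrates the general technique only, not Gigamon's implementation: the `PacketObservation` record, its field names, and the 50 ms window are hypothetical assumptions. It treats two observations as the same packet when they share a 5‑tuple and payload, regardless of which tap saw them.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PacketObservation:
    # Hypothetical record of one packet as seen at one capture point.
    tap_id: str
    timestamp: float  # seconds
    src: str
    dst: str
    sport: int
    dport: int
    proto: str
    payload: bytes


def dedup_key(obs: PacketObservation) -> tuple:
    # "Same packet" = same 5-tuple and same payload, regardless of which tap saw it.
    digest = hashlib.sha256(obs.payload).hexdigest()
    return (obs.src, obs.dst, obs.sport, obs.dport, obs.proto, digest)


def deduplicate(observations, window_s: float = 0.05):
    """Keep the first copy of each packet and drop copies with the same key
    that arrive within window_s seconds of the kept copy (window is an assumption)."""
    kept_at = {}
    unique = []
    for obs in sorted(observations, key=lambda o: o.timestamp):
        key = dedup_key(obs)
        first = kept_at.get(key)
        if first is None or obs.timestamp - first > window_s:
            unique.append(obs)
            kept_at[key] = obs.timestamp
    return unique


if __name__ == "__main__":
    seen = [
        PacketObservation("tap-a", 0.000, "10.0.0.1", "10.0.0.2", 51000, 443, "tcp", b"hello"),
        PacketObservation("tap-b", 0.002, "10.0.0.1", "10.0.0.2", 51000, 443, "tcp", b"hello"),
        PacketObservation("tap-a", 0.010, "10.0.0.1", "10.0.0.2", 51000, 443, "tcp", b"world"),
    ]
    print(len(deduplicate(seen)))  # prints 2: the tap-b copy of "hello" is dropped
```

Keying on the payload hash rather than the full packet is a deliberate choice in this sketch: header fields such as TTL and checksum can change between capture points, so they would prevent mirrored copies from matching.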

Improving Infrastructure Visibility Across Hybrid Cloud Environments

Infrastructure monitoring buyers expect visibility into availability and operating conditions across servers, networks, storage, hypervisors, databases, containers, and cloud services.

Across network, cloud, container, and application environments, Gigamon helps teams observe traffic presence, flow health, retransmissions, drops, latency, resets, timeouts, throughput, and request/response behavior. These signals do not replace CPU, memory, disk, storage, or hypervisor utilization metrics. Instead, they complement them with network-level evidence of how services are actually communicating across hybrid infrastructure.

In business terms, Gigamon gives teams network‑derived ground truth for service availability, helping them detect blind spots, accelerate investigation, and improve the quality of the data flowing into their monitoring tools.

Real‑Time Infrastructure Monitoring and Historical Analysis

In our view, infrastructure monitoring is best understood as a pipeline of capabilities that transform raw data into actionable insight, combining real‑time data collection, historical analysis, network‑level visibility, and alerting to support diagnosis, troubleshooting, and operational decision‑making.

We believe this model highlights an important limitation: the quality of insights depends entirely on the quality of the underlying data. If telemetry is incomplete, delayed, or inconsistent across domains, even the most advanced analytics and alerting systems will produce gaps, noise, or conflicting signals.

Infrastructure and platform teams need real-time visibility during incidents and reliable telemetry for historical analysis, trend reporting, capacity planning, and SLA management. Gigamon supports this requirement in a way that keeps architecture simple and cost‑effective.

GigaVUE‑FM processes live packet and flow data at scale and generates structured metadata, including flow records, session details, and application attributes. Infrastructure monitoring tools can then use that telemetry to analyze latency, jitter, packet loss, throughput, connection rates, and application or transaction response characteristics.
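To make the last step concrete, here is a minimal sketch of how a monitoring tool might turn a window of flow metadata into packet loss, throughput, latency, and jitter. The `FlowRecord` fields are hypothetical and are not the GigaVUE‑FM metadata schema. Jitter is computed as the mean absolute change between consecutive latency samples, a simplified, unsmoothed variant of the interarrival jitter idea in RFC 3550.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class FlowRecord:
    # Hypothetical per-interval flow summary; field names are assumptions.
    start: float           # interval start, seconds
    end: float             # interval end, seconds
    bytes_sent: int
    packets_sent: int
    packets_received: int
    rtt_ms: float          # round-trip latency sample for the interval


def summarize(flows: list[FlowRecord]) -> dict:
    """Aggregate a window of flow records into service-level metrics."""
    if not flows:
        raise ValueError("need at least one flow record")
    sent = sum(f.packets_sent for f in flows)
    received = sum(f.packets_received for f in flows)
    duration = max(f.end for f in flows) - min(f.start for f in flows)
    total_bytes = sum(f.bytes_sent for f in flows)
    # Order latency samples in time before measuring variation between them.
    rtts = [f.rtt_ms for f in sorted(flows, key=lambda f: f.start)]
    diffs = [abs(b - a) for a, b in zip(rtts, rtts[1:])]
    return {
        "loss_pct": 100.0 * (sent - received) / sent if sent else 0.0,
        "throughput_bps": 8 * total_bytes / duration if duration > 0 else 0.0,
        "mean_latency_ms": mean(rtts),
        "jitter_ms": mean(diffs) if diffs else 0.0,
    }


if __name__ == "__main__":
    window = [
        FlowRecord(0.0, 1.0, 125_000, 100, 99, 20.0),
        FlowRecord(1.0, 2.0, 125_000, 100, 100, 24.0),
        FlowRecord(2.0, 3.0, 125_000, 100, 98, 22.0),
    ]
    print(summarize(window))
```

For this three‑second window, 3 of 300 packets are missing (1% loss), 375,000 bytes yield 1 Mbit/s, and latency moves by 4 ms and then 2 ms, giving 3 ms of jitter.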

Gigamon does not need to be the metrics store, dashboard, or analytics engine. Its role is to deliver high‑fidelity, network‑derived telemetry so monitoring platforms can produce better analytics, visualization, and reporting.

Improving Alert Accuracy With Network-Derived Telemetry

Alert fatigue wastes time and obscures real risk. GigaVUE‑FM is not the primary alert engine; it improves alert quality by supplying filtered, enriched, and deduplicated telemetry to the monitoring platforms that already generate alerts. With better input data, those platforms can generate more reliable threshold- and anomaly-based alerts for latency, packet loss, traffic anomalies, and suspicious activity.
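The two alert styles named above can be sketched in a few lines, as a monitoring platform might apply them to a latency series derived from network telemetry. This is a generic illustration, not the alerting logic of any product mentioned here. The 40 ms limit, the 10‑sample baseline, and the 3‑sigma cutoff are assumptions chosen for the example.

```python
from statistics import mean, stdev


def threshold_alerts(samples: list[float], limit: float) -> list[int]:
    """Indices of samples that exceed a fixed limit (e.g. latency in ms)."""
    return [i for i, v in enumerate(samples) if v > limit]


def anomaly_alerts(samples: list[float], baseline: int = 10,
                   z_cutoff: float = 3.0) -> list[int]:
    """Indices whose value deviates from the trailing baseline window by more
    than z_cutoff sample standard deviations (a simple rolling z-score)."""
    alerts = []
    for i in range(baseline, len(samples)):
        window = samples[i - baseline:i]
        mu, sigma = mean(window), stdev(window)
        if sigma > 0 and abs(samples[i] - mu) / sigma > z_cutoff:
            alerts.append(i)
    return alerts


if __name__ == "__main__":
    latency_ms = [20, 21, 19, 20, 22, 21, 20, 19, 21, 20, 45, 20]
    print(threshold_alerts(latency_ms, limit=40))  # prints [10]
    print(anomaly_alerts(latency_ms))              # prints [10]
```

The two checks complement each other: a fixed threshold catches absolute breaches, while the z‑score check also flags a jump from about 20 ms to 35 ms that never crosses a 40 ms limit. Both depend on clean input, since duplicated or missing samples distort the baseline statistics.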

For visualization, Gigamon provides packet-level visibility into the network topology and traffic flows, while tools such as Splunk, Elastic, Grafana, Datadog, and Dynatrace deliver dashboards, trends, and capacity views using Gigamon network-derived telemetry.

Role‑based access control and selective traffic distribution in GigaVUE‑FM help network, security, and cloud operations teams access the data relevant to their tools and workflows. That supports stronger governance and faster coordinated response.

A Critical Visibility Layer for Modern Infrastructure Monitoring

GigaVUE‑FM is not a host‑based metrics collector and not a replacement for infrastructure monitoring platforms. It delivers authoritative, network‑derived telemetry that helps teams understand true service availability and performance across hybrid and cloud environments.

By delivering cross‑domain, packet‑level visibility and helping existing tools operate on complete, accurate, and noise‑reduced data, Gigamon serves as a critical visibility layer within modern infrastructure monitoring architectures. The result: fewer blind spots, faster incident resolution, and more resilient, compliant digital services.


1-2 Source: Gartner, Market Guide for Infrastructure Monitoring Tools, Pankaj Prasad, Martin Caren, Neil Young, Aparna Bhaumik, 13 April 2026. GARTNER is a trademark of Gartner, Inc. and/or its affiliates.